Coordinated Inauthentic Behavior (CIB)

What is coordinated inauthentic behavior?

Coordinated inauthentic behavior (CIB) happens when bad guys create fake social media accounts and coordinate posts to manipulate public debate. They mislead people about who they are and what they’re doing.

How does coordinated inauthentic behavior happen?

Bad actors create a network of fake accounts, build a network of automated (bot) accounts, assemble a network of real people, or use some combination of the three.

They then post the same content en masse at the same time to promote a message, change the conversation, or stir up outrage over an issue.

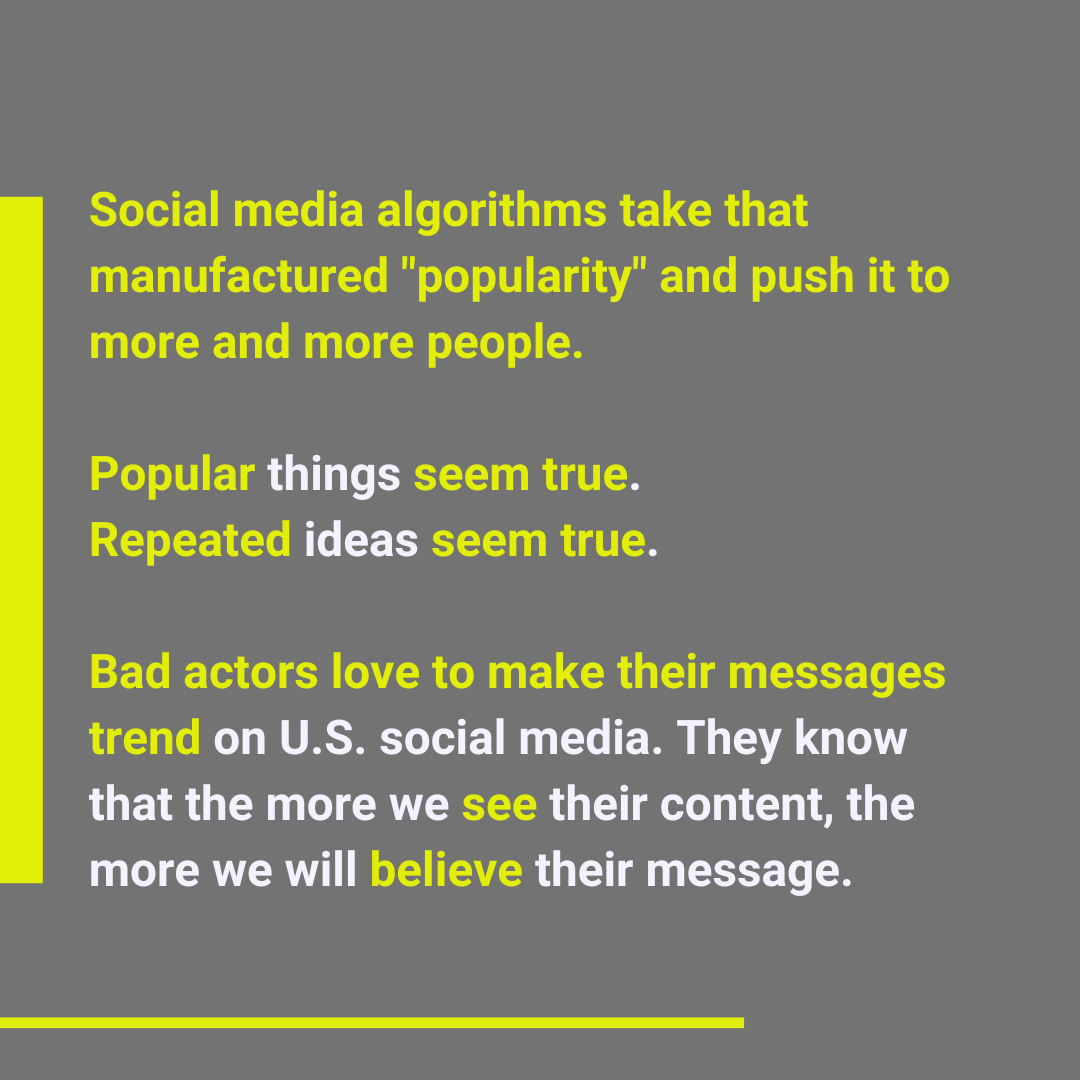

People’s responses to this activity, whether arguing in the comments, liking, or sharing, signal to the platforms’ algorithms that the content is popular, which extends its reach even further.

What does coordinated inauthentic behavior look like?

Disinfluencers do this in a ton of ways.

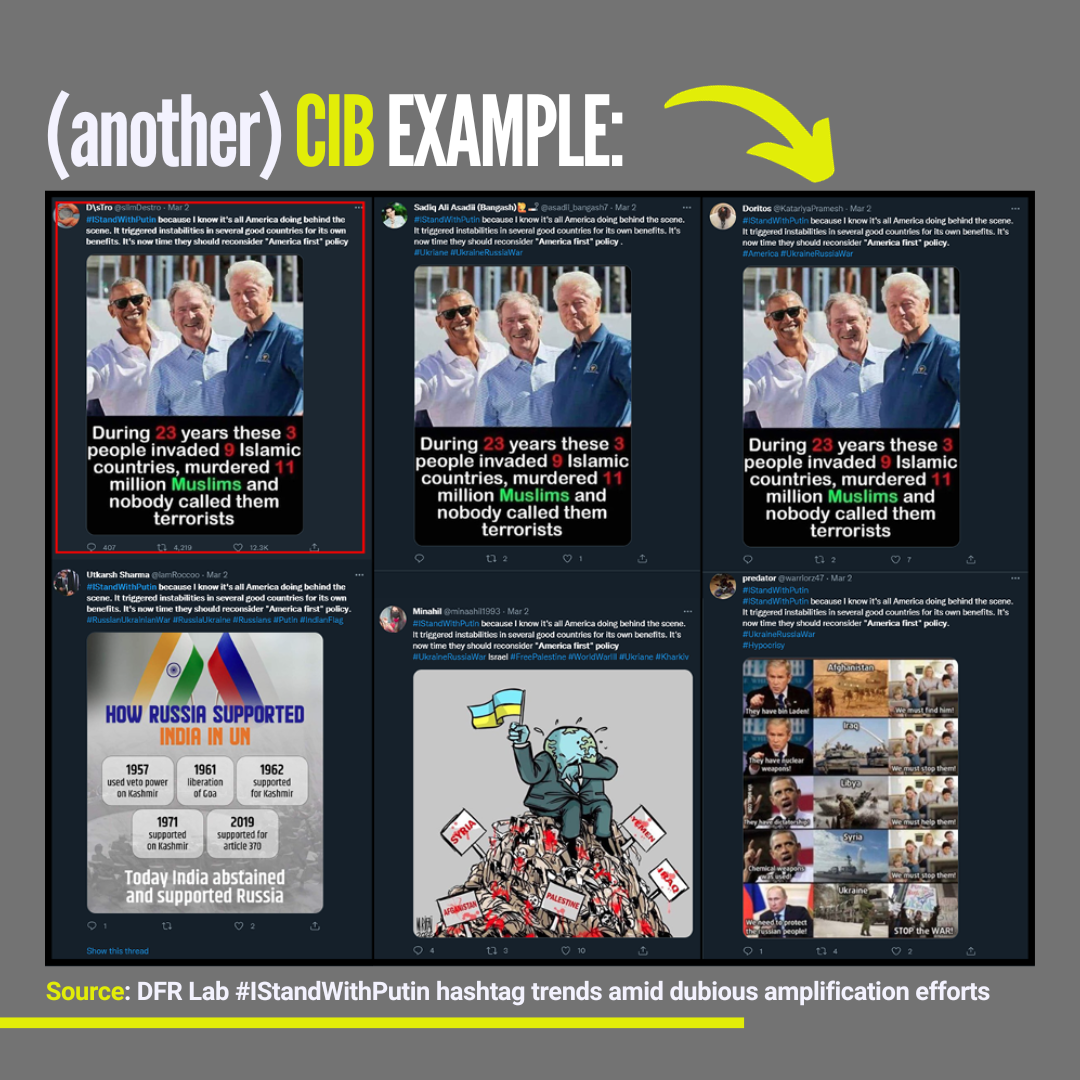

Sometimes they want to make a topic seem super popular, like the hundreds of Twitter accounts that retweeted #IStandWithPutin after Russia invaded Ukraine. The hashtag actually trended for a while before Twitter removed more than 100 of the accounts, yet several days later plenty of posts were still using it.

Some of these accounts were clearly bots, but many came from troll farms set up like call centers, and social media companies struggle to find and remove all of them.

Worse, the messages sometimes get even more attention from people who disagree with them. #IStandWithPutin trended a second time after people started adding it to critical posts and outraged replies. One researcher said those tweets were among the most engaged-with. Twitter says it finds about 130,000 accounts each day that try to manipulate trending topics.

Other times their goal is to harass individuals or companies. In December 2021, Facebook took down a network of Italy- and France-based anti-vax accounts targeting medical professionals, journalists, and public officials. The accounts mass-commented on posts with memes and intimidating language to try to suppress the posters’ views.

In another case, Facebook removed a Vietnamese network that had submitted thousands of complaints against activists it was trying to silence. The attackers also created duplicates of some of those activists’ accounts, then turned around and submitted hundreds of complaints against the real accounts.

Sometimes the bad guys just make people up, like a fake biologist who criticized the U.S. Once “he” started posting, Chinese state media began reporting on “his” statements, and high-level government officials engaged with the posts. Facebook removed the fake biologist’s account and called it “the work of a multi-pronged, largely unsuccessful influence operation” involving “employees of Chinese state infrastructure companies across four continents.”

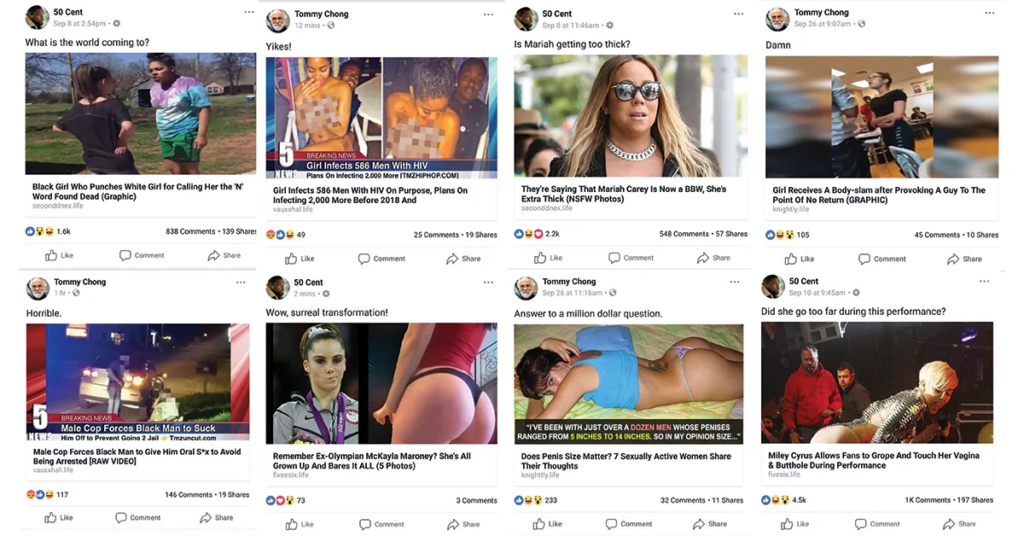

Another example is a bastardized version of influencer marketing. Bad guys are using verified celebrity accounts to post clickbait. Like influencer marketing, it turns an audience into money: clickbait sites earn ad revenue from every click. Facebook users who “like” these celebrity pages are shown tons of spam and fake news, and the links lead to websites Facebook has banned.

All of these tactics serve one simple goal: making things seem more popular than they really are by flooding feeds with content. When we see something again and again, it seems popular, and that repetition creates an illusion of truth. Even when we think we know better, we can still fall victim to what’s called the Illusory Truth Effect.

Take Covid-19 as an example. For years we’ve been told that extra vitamin C and zinc can help prevent colds, so we stock up on them to try to avoid Covid as well, even though medical experts tell us they won’t prevent infection. That’s the Illusory Truth Effect at work: we may be skeptical the first time we hear something, but if we hear it over and over, it starts to feel true, even when deep down we know it’s nonsense.

Social media platforms recognize that CIB is a big problem. Meta, the parent company of Facebook and Instagram, puts out a monthly CIB report on the bad behavior it finds. Twitter releases details twice a year, and TikTok reports its findings quarterly.

The problem is that a lot of us don’t even know what CIB means, what to look for, or how to report it. Worse, the platforms sometimes know about this activity and choose to do nothing if the accounts are popular enough.

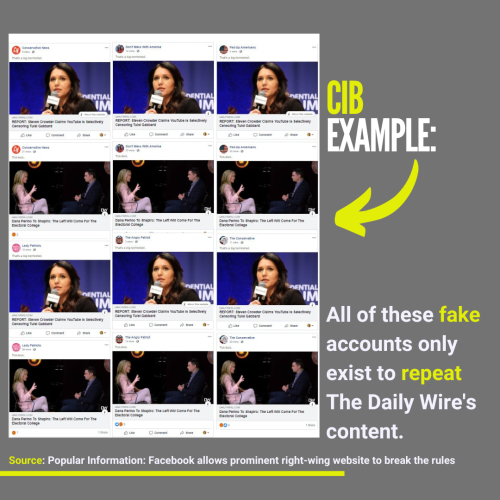

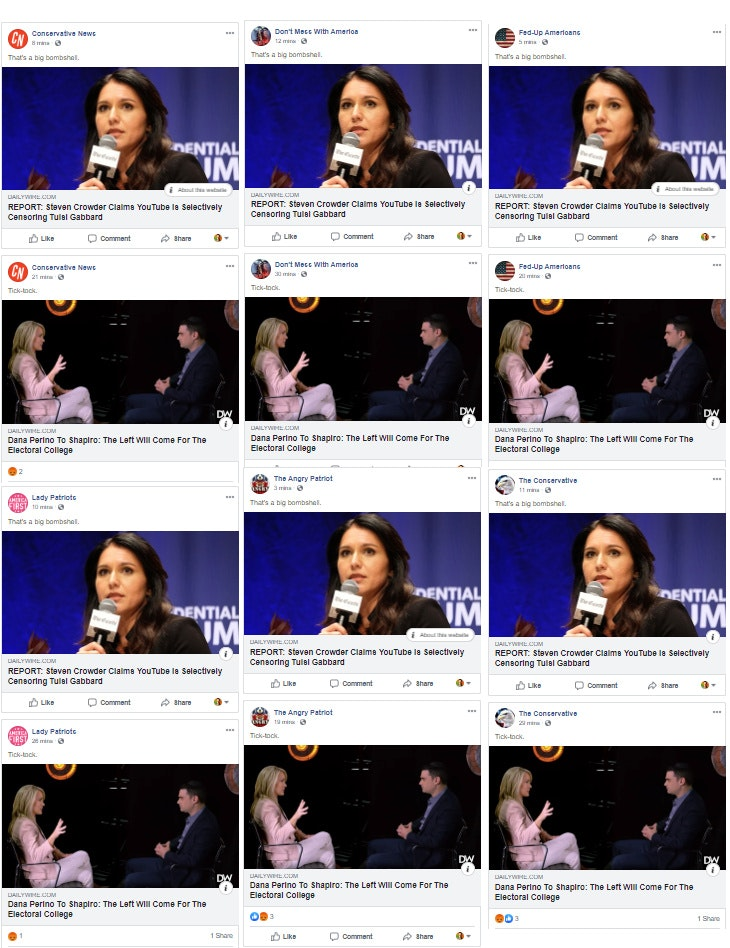

Take, for example, The Daily Wire, right-wing provocateur Ben Shapiro’s website, which is heavy on fake news and conspiracy theories.

It has a massive Facebook following, made even bigger by a network of at least 14 large Facebook pages that exist only to promote content from The Daily Wire. None of them reveal their connection to the controversial website, and some even pretend to be independent media outlets.

This directly violates Facebook’s Community Standards, which prohibit “the use of Facebook or Instagram assets (accounts, Pages, Groups, or Events), to mislead people or Facebook…about the identity, purpose, or origin of the entity that they represent.”

Yet The Daily Wire continues to use this tactic even though it has been reported.

What should we do about coordinated inauthentic behavior?

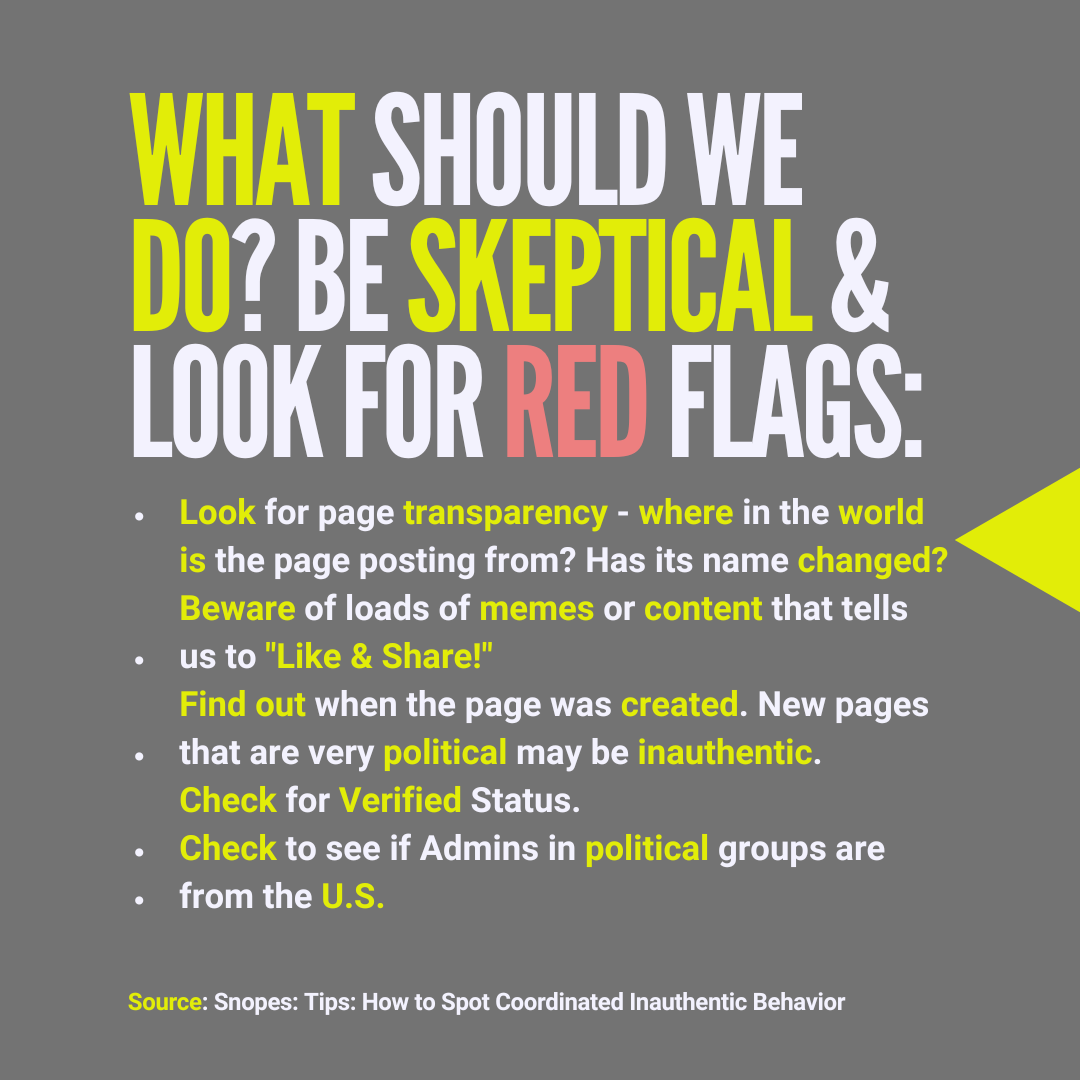

It’s tough to know when we’re seeing something that falls into the CIB category. Being skeptical about any information online that is not from a source we recognize is always smart.

Beyond that, Snopes lists five things to look for when we’re online:

- Check page transparency: Find out where in the world a Facebook page is being run from and whether it has had any name changes.

- Be skeptical of “Like and Share!”: If a page has endless memes telling us to “like and share,” they may be part of an inauthentic network.

- Check the page creation date: Look at the About or Page Transparency page to find out when the page was created. If it’s new, features U.S. politics, and appears not to be run from inside the U.S., that may be a red flag.

- Check to see if a page is verified: If a page is verified, it likely represents what it claims to represent.

- Check who the admins are: Look at who runs a Facebook group. If a political group is run from another country, that can be a clue it’s not legit.

Sources

Slate: What Does “Coordinated Inauthentic Behavior” Actually Mean?

Snopes: Snopestionary: What Is ‘Coordinated Inauthentic Behavior’?

Snopes: Misinfluencers, Inc.: How Fake News Is Reaching Millions Using Verified Facebook Accounts

Snopes: A Collection of Coordinated Inauthentic Behavior

Engadget: Facebook details its takedown of a mass-harassment network

Twitter: Platform Manipulation

Twitter: Platform manipulation and spam policy

Twitter: Update: Russian interference in the 2016 US presidential election

Facebook: January 2022 Coordinated Inauthentic Behavior Report

Facebook: Inauthentic Behavior

TikTok: Community Guidelines Enforcement Report

TikTok: Community Guidelines

Media Matters: Twitter has allowed one user to manipulate the platform by coordinating over 40 hashtags, seemingly in violation of policy

The Decision Lab: Why do we believe misinformation more easily when it’s repeated many times?

NBC News: Twitter bans over 100 accounts that pushed #IStandWithPutin

Medium: #IStandWithPutin hashtag trends amid dubious amplification efforts

POLITICO: Social media platforms on the defensive as Russian-based disinformation about Ukraine spreads

Twitter (@sanusi90064): Tweet published March 7, 2022, 7:32 AM

Business Insider: Twitter bans more than 100 accounts using the hashtag #IStandWithPutin for violating the platform's 'manipulation and spam policy'

Popular Information: Facebook allows prominent right-wing website to break the rules

Wired: What Even Is ‘Coordinated Inauthentic Behavior’ on Platforms?